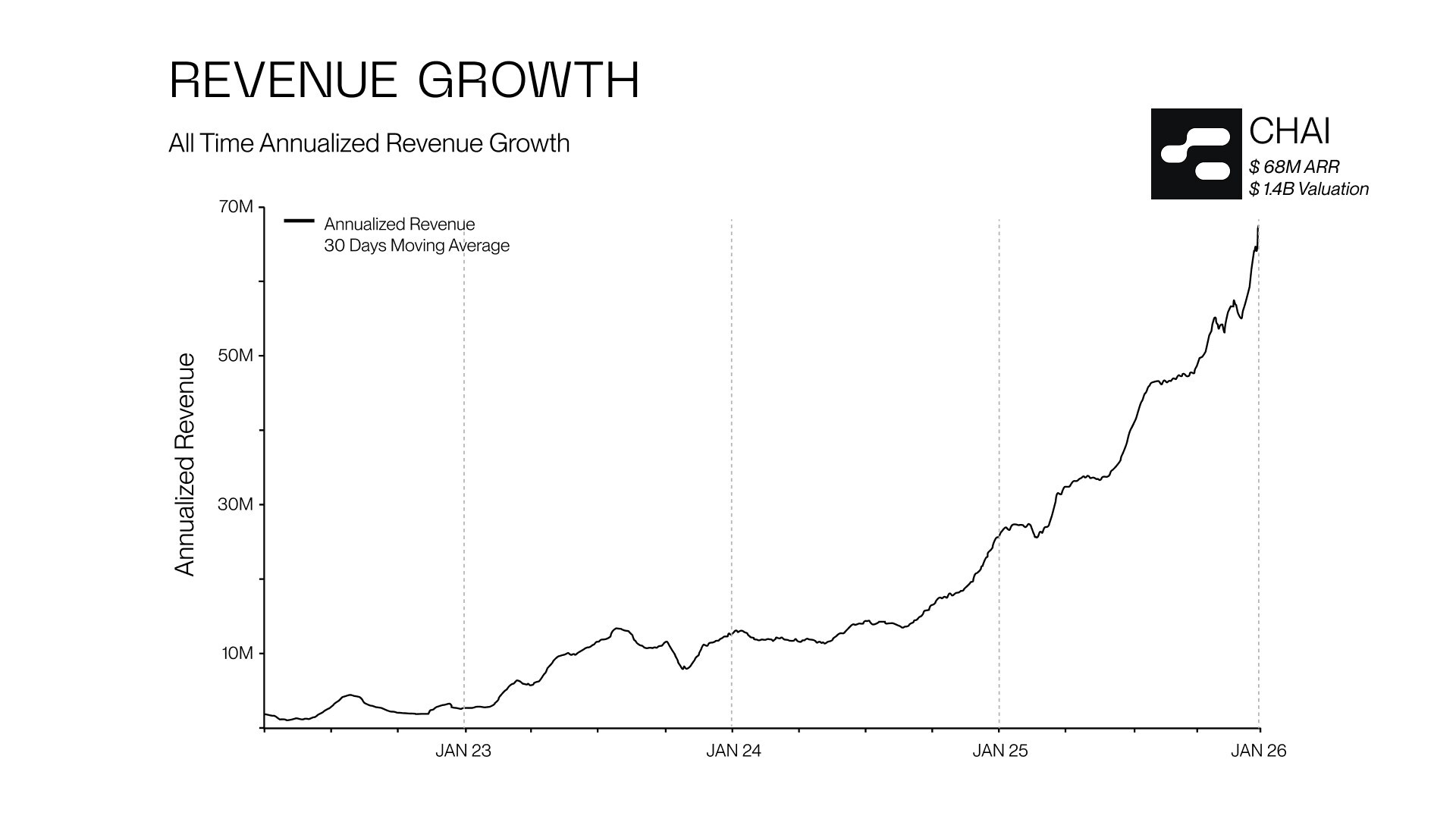

CHAI, an AI platform based in Palo Alto, California, has reported annual recurring revenue (ARR) of $68 million and a valuation of $1.4 billion, following threefold annual growth in each of the past three years. As the company continues to expand, it is also intensifying its commitment to safety and ethical standards in AI technology.

CHAI's rapid growth brings significant responsibilities, particularly as safety standards evolve. The company acknowledges the challenge of scaling its operations while maintaining a secure environment for users. To address it, CHAI has dedicated substantial resources to developing and managing AI systems that benefit humanity and generate safe content.

Commitment to AI Safety Standards

As part of its ongoing commitment to user safety, CHAI has implemented a range of protective measures. These measures aim to safeguard users against self-harm and to keep the platform compliant with international standards. CHAI’s protocols align with the requirements set by the EU AI Act and the NIST AI Risk Management Framework. The company also adheres to guidelines established by the International Association for Suicide Prevention (IASP), which help protect vulnerable individuals in times of distress.

To create a safer environment, CHAI employs a moderation system designed to filter out harmful content. This system enables the AI to serve as a supportive resource for individuals in crisis. Furthermore, the company is developing a real-time suicide and self-harm classifier that monitors active conversations, allowing for the early detection of potential suicidal ideation. By identifying unsafe behavior promptly, CHAI can activate safety mechanisms to intervene effectively and prevent escalation.
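The detect-then-intervene flow described above can be illustrated with a minimal sketch. It is not CHAI's implementation: the `ConversationMonitor` class, the `score_fn` classifier interface, the 0.8 threshold, the five-message window, and the wording of `SAFETY_MESSAGE` are all assumptions made for illustration.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import Callable, Deque

# Illustrative wording only; a real deployment would use vetted, localized resources.
SAFETY_MESSAGE = (
    "It sounds like you are going through a lot. Please consider reaching out "
    "to a local helpline or a mental health professional."
)


@dataclass
class ConversationMonitor:
    """Tracks recent per-message risk scores for a single conversation."""

    score_fn: Callable[[str], float]  # hypothetical classifier: text -> risk in [0, 1]
    threshold: float = 0.8            # assumed cutoff, not a published value
    window: int = 5                   # number of recent messages considered
    _scores: Deque[float] = field(default_factory=deque, init=False)

    def observe(self, message: str) -> bool:
        """Score a new message; return True if safety handling should trigger."""
        self._scores.append(self.score_fn(message))
        if len(self._scores) > self.window:
            self._scores.popleft()
        # Trigger if any message in the recent window crossed the threshold.
        return max(self._scores) >= self.threshold


def respond(
    message: str,
    monitor: ConversationMonitor,
    generate: Callable[[str], str],
) -> str:
    """Return a supportive safety message when risk is detected, else a normal reply."""
    if monitor.observe(message):
        return SAFETY_MESSAGE
    return generate(message)
```

Because the check looks at the maximum score in the window, the safety response in this sketch stays active for several messages after a high-risk one rather than switching off immediately.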

While CHAI’s AI tools provide immediate support, they are not substitutes for professional medical advice. If users express thoughts of self-harm or suicide, the AI responds with compassion and directs them to appropriate resources, such as helplines and mental health professionals.

Operational Transparency and Future Directions

CHAI places a strong emphasis on operational transparency. User conversations are securely logged on private servers, and the company conducts thorough, anonymized reviews of these interactions to detect potential risks. This auditing process adheres to privacy protocols comparable to HIPAA standards, which allows CHAI to enhance platform safety without compromising user confidentiality.
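As a rough illustration of what preparing logged conversations for review might involve, the sketch below replaces user identifiers with keyed hashes and masks email addresses. The record layout (`user_id`, `text`), the function names, and the choice of HMAC-SHA256 are assumptions, not details from CHAI. Strictly speaking this is pseudonymization, which is weaker than full anonymization.

```python
import hashlib
import hmac
import re

# Simple email pattern for illustration; real PII detection needs broader coverage.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")


def pseudonymize_user(user_id: str, key: bytes) -> str:
    """Map a user ID to a stable token that cannot be reversed without the key."""
    digest = hmac.new(key, user_id.encode("utf-8"), hashlib.sha256).hexdigest()
    return digest[:16]


def scrub_record(record: dict, key: bytes) -> dict:
    """Return a copy of a log record that is safer to hand to reviewers."""
    return {
        "user": pseudonymize_user(record["user_id"], key),
        "text": EMAIL_RE.sub("[email]", record["text"]),
    }
```

Using a keyed hash means the same user maps to the same token across records, so reviewers can follow a conversation without seeing the underlying identifier.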

The ongoing evolution of AI technology necessitates a robust safety framework. As CHAI’s user base expands, the company is committed to ensuring that its infrastructure can scale securely. The successful implementation of its safety measures demonstrates the company’s dedication to responsible and ethical AI practices.

In light of its recent achievements, CHAI is poised to set a precedent in the industry by prioritizing user safety and ethical technology use. The company plans to continue collaborating with safety experts and stakeholders to reinforce its commitment to protecting data and ensuring responsible AI behavior.

For further insights into CHAI’s safety methodology, readers can consult the official paper titled “The Chai Platform’s AI Safety Framework.”

Press inquiries can be directed to Tom Lu at +1 (626) 594-8966.